Author: Nicole


Js Webscraper

11/8/2021

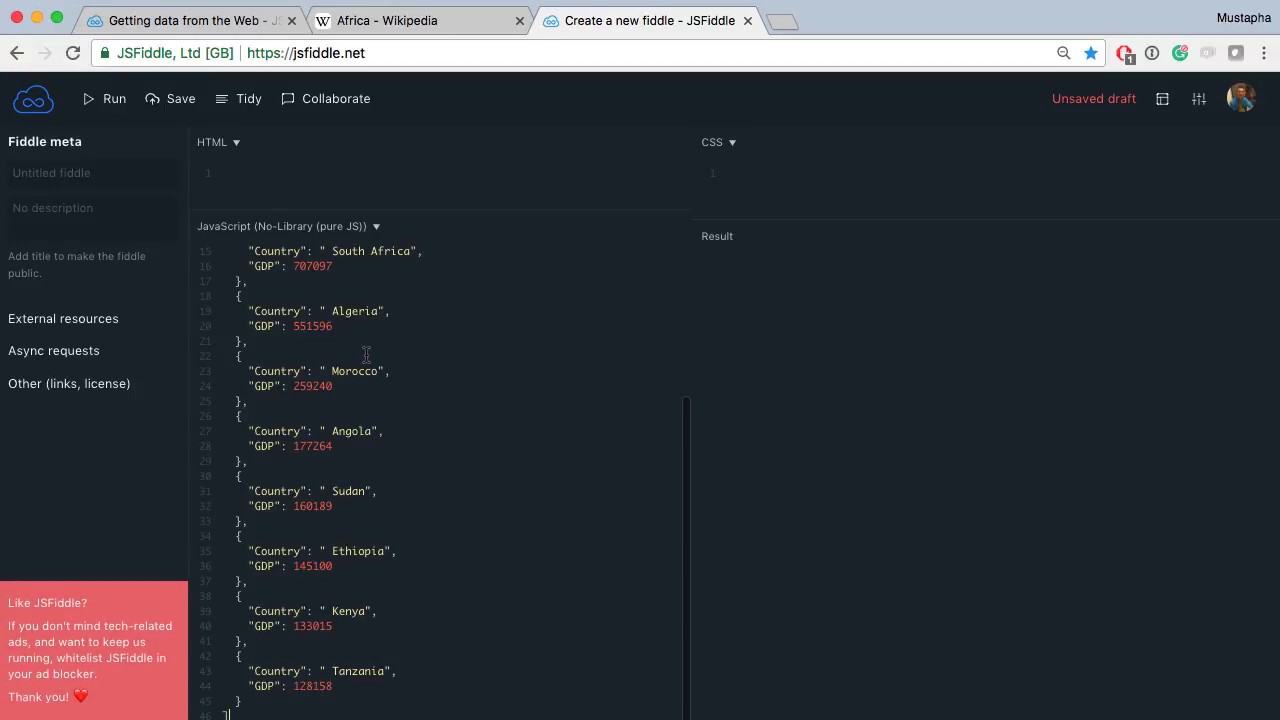

Once the data is scraped, download it as a CSV file that can then be imported into Excel, Google Sheets, etc. Additionally, it is possible to completely automate data extraction in Web Scraper Cloud. EDIT Sept 2021: phantomjs isn't maintained any more, either. Prerequisites: know a little bit about JavaScript and, of course, understand HTML and CSS.
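If you are producing the CSV from your own scraper rather than downloading it from a tool, the export step might look roughly like the sketch below. It uses only Python's standard `csv` module; the `rows_to_csv` helper, the field names, and the sample records are made up for illustration and do not come from any real scrape.

```python
import csv
import io


def rows_to_csv(rows, fieldnames):
    """Serialize a list of dicts to CSV text with a header row.

    `rows_to_csv` is a hypothetical helper name, not part of any scraper API.
    """
    buffer = io.StringIO()
    # extrasaction="ignore" silently drops keys that are not in fieldnames.
    writer = csv.DictWriter(buffer, fieldnames=fieldnames, extrasaction="ignore")
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


# Hypothetical scraped records, for illustration only.
sample_rows = [
    {"title": "Example item", "price": "9.99"},
    {"title": "Item, with comma", "price": "12.50"},
]

csv_text = rows_to_csv(sample_rows, ["title", "price"])
print(csv_text)
```

Values that contain commas are quoted automatically by the `csv` module, so the file should open cleanly in Excel or Google Sheets.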

Create your Dockerfile from a lightweight image (I'm using python Alpine here), copy your project directory to it, and install the requirements. The Dockerfile uses an official Python runtime as a parent image, then installs some packages necessary to scrapy, plus curl because it's handy for debugging: `RUN apk --update add linux-headers libffi-dev openssl-dev build-base libxslt-dev libxml2-dev curl python-dev`.

And finally, bring it all together in docker-compose.yaml (`version: '2'`), passing `SELENIUM_LOCATION=samplecrawler_selenium_1` to the scraper container as an environment variable and giving it a command that keeps the container running.

Run `docker-compose up -d`. If you're doing this for the first time, it will take a while to fetch the latest selenium/standalone-chrome image and then build your scraper image as well. Once it's done, you can check that your containers are running with `docker ps`, and also check that the name of the selenium container matches the environment variable that we passed to our scraper container (here, it was `SELENIUM_LOCATION=samplecrawler_selenium_1`).
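Inside the scraper container, the `SELENIUM_LOCATION` variable can be turned into the address of the remote browser. The sketch below is one way to do that, not the original project's code: the function names are hypothetical, and port `4444` with the `/wd/hub` path is an assumption based on the usual defaults of the selenium/standalone-chrome image. The `selenium` package is imported lazily, so the URL helper works even where it is not installed.

```python
import os


def selenium_remote_url(default_host="localhost", port=4444):
    """Build the remote WebDriver URL from the SELENIUM_LOCATION variable.

    Port 4444 and the /wd/hub path are assumed defaults, not taken from
    the original compose file.
    """
    host = os.environ.get("SELENIUM_LOCATION", default_host)
    return f"http://{host}:{port}/wd/hub"


def make_driver():
    """Connect to the Chrome instance running in the selenium container."""
    # Imported here so the URL helper above has no third-party dependency.
    from selenium import webdriver

    options = webdriver.ChromeOptions()
    return webdriver.Remote(
        command_executor=selenium_remote_url(),
        options=options,
    )
```

With `SELENIUM_LOCATION=samplecrawler_selenium_1` set, `selenium_remote_url()` resolves to the selenium container by name over the compose network, which is why the container name and the variable need to match.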
